Introduction

For years, many organizations have relied on SAS for their analytics and reporting workflows. Imagine a data team that has spent years building insights using SAS scripts, models, and reporting pipelines. These systems have powered everything from forecasting and risk analysis to operational reporting. But as data volumes grow, teams often find themselves struggling with longer processing times, rigid infrastructure, and complex maintenance. What once worked well for traditional analytics is now becoming harder to scale.

This growing need for agility is driving enterprises to rethink their analytics foundation and explore cloud data platforms such as Snowflake. Migrating from SAS to Snowflake enables organizations to move beyond legacy constraints and gain more scalable data processing, faster analytics performance, and seamless integration with modern data and AI ecosystems. In this blog, we explore the key challenges, benefits, and migration strategy steps for organizations moving from SAS to Snowflake to modernize their analytics environment.

Understanding the Challenges of SAS to Snowflake Migration

Migrating from SAS to Snowflake can be complex. SAS environments often include large volumes of SAS datasets, legacy ETL workflows, stored procedures, macros, and statistical models that are tightly integrated into business operations. Because SAS uses proprietary programming syntax and data formats, SAS scripts must be converted into Snowflake-compatible SQL, Python, or transformation frameworks.

Additionally, enterprises must address challenges related to data mapping, schema transformation, workflow orchestration, and performance optimization within Snowflake’s cloud architecture. Without a structured strategy, a migration risks disrupting critical reporting pipelines, creating data inconsistencies, and adding operational complexity. This is why enterprises increasingly adopt a phased migration approach that carefully evaluates existing SAS workloads.

Key Benefits of Migrating SAS Workloads to Snowflake

Improved Scalability for Data Processing

One of the primary advantages of migrating to Snowflake is its ability to scale analytics workloads dynamically. Traditional SAS environments often rely on fixed infrastructure, which can create performance bottlenecks when processing large datasets. Snowflake’s cloud-native architecture separates storage and compute resources, allowing organizations to scale computing power independently based on workload demands. This enables enterprises to process massive volumes of data more efficiently and run concurrent analytics workloads.

Significant Cost Optimization

Maintaining SAS environments can be expensive due to licensing costs, on-premises infrastructure requirements, and ongoing maintenance expenses. Migrating to Snowflake allows organizations to transition to a consumption-based pricing model where they pay only for the compute resources they use. Snowflake also removes the need to manage physical hardware and infrastructure upgrades, and it reduces routine database administration. Together, these changes can lower operational costs.
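As a rough illustration of consumption-based pricing, the sketch below estimates the compute cost of a single job run. It assumes Snowflake's standard warehouse credit scale (1 credit per hour for X-Small, doubling with each size) and per-second billing with a 60-second minimum. The price per credit and the job runtime are hypothetical values, since actual rates depend on edition, region, and contract.

```python
# Credits consumed per hour by standard warehouse size (doubling scale).
CREDITS_PER_HOUR = {"XSMALL": 1, "SMALL": 2, "MEDIUM": 4, "LARGE": 8, "XLARGE": 16}

# Each warehouse start or resume is billed for at least 60 seconds.
MIN_BILLED_SECONDS = 60


def estimate_run_cost(size: str, runtime_seconds: float, price_per_credit: float) -> float:
    """Estimate the compute cost of one warehouse run, in currency units."""
    billed_seconds = max(runtime_seconds, MIN_BILLED_SECONDS)
    credits = CREDITS_PER_HOUR[size] * billed_seconds / 3600
    return credits * price_per_credit


# Hypothetical nightly job: 15 minutes on a MEDIUM warehouse,
# at an assumed (not quoted) price of 3.00 per credit.
nightly = estimate_run_cost("MEDIUM", 15 * 60, price_per_credit=3.00)
print(f"Estimated cost per run: {nightly:.2f}")
```

With these assumed inputs the run consumes one credit. The same function can be applied across an inventory of SAS jobs to compare projected compute spend against current licensing and infrastructure costs.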

Enhanced Data Integration

Modern enterprises rely on multiple data sources, including transactional systems, customer platforms, external data providers, and operational applications. SAS environments often operate in silos, making it difficult to integrate data across different systems. Snowflake provides a unified data platform that enables seamless integration with various cloud services, analytics tools, and business intelligence platforms. This enables data engineers, analysts, and business teams to collaborate more effectively.

Faster Analytics and Real-Time Data Insights

Snowflake is designed to support high-performance analytics using distributed computing and advanced query optimization. This architecture allows organizations to execute complex queries on large datasets faster than fixed-capacity SAS environments typically allow. As a result, analysts can perform advanced statistical analysis, build predictive models, and generate insights in near real time.

Modern Data and AI Ecosystems

Migrating to Snowflake opens the door to a broader ecosystem of modern data technologies and advanced analytics tools. Snowflake integrates with ML frameworks, data science environments, and visualization platforms. This helps enterprises adopt advanced capabilities such as predictive analytics, AI-driven insights, and automated data pipelines. By modernizing their analytics infrastructure, enterprises can empower data teams to build more sophisticated models and deliver deeper insights.

A Strategic Approach to SAS to Snowflake Data Migration

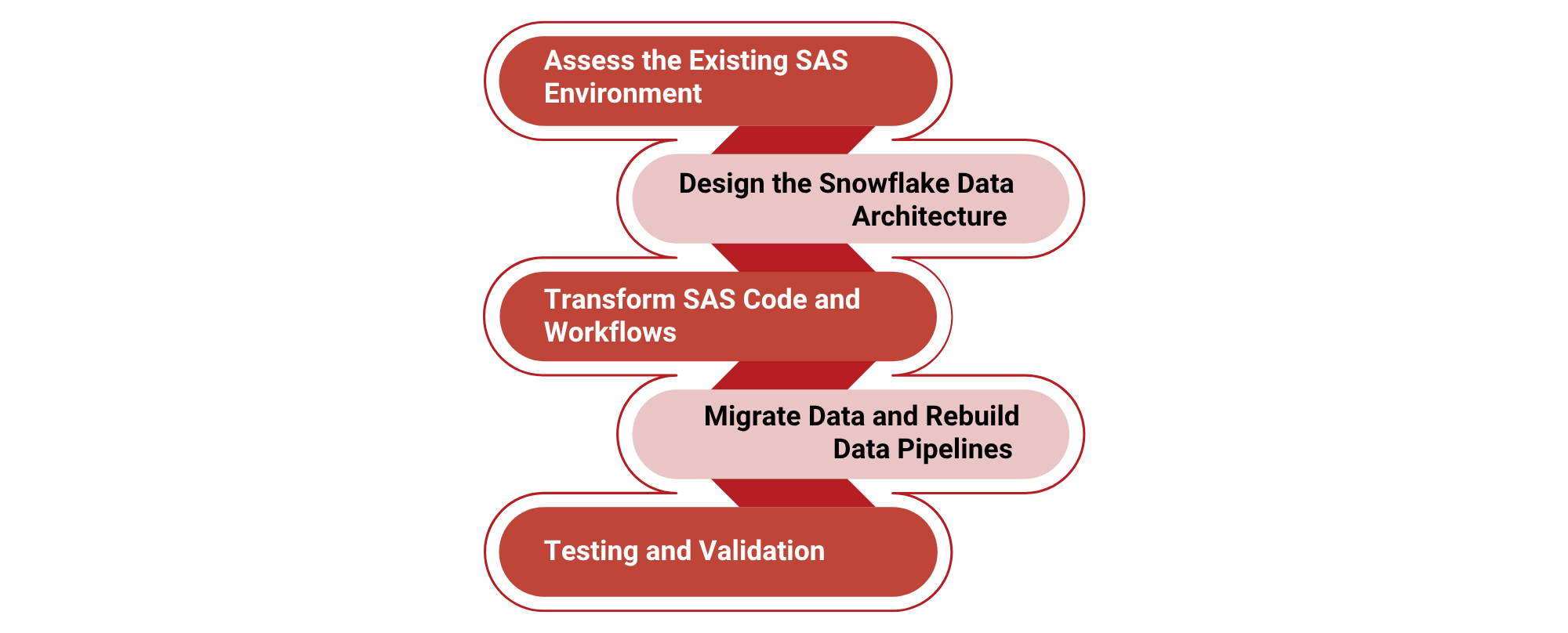

Migrating SAS workloads to Snowflake requires more than simply moving datasets from one platform to another. Enterprises must modernize their analytics pipelines, transform legacy code, and redesign data architectures. A structured migration strategy ensures minimal disruption to business operations while maximizing performance and scalability in the new environment. Below is a five-step strategic framework for migrating SAS analytics workloads to Snowflake.

Assess the Existing SAS Environment and Workload Dependencies

The first step in a successful migration is conducting a comprehensive assessment of the current SAS environment. Organizations must analyze all SAS assets, including datasets, macros, stored procedures, ETL workflows, and reporting pipelines to understand the complexity of the migration. This discovery phase identifies data dependencies, workflow relationships, and redundant processes.

Automated assessment frameworks can scan the SAS environment to generate a complete inventory of code, analytics workflows, and datasets. This allows teams to prioritize critical workloads, identify optimization opportunities, and define a phased migration plan.
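To make the idea of an automated inventory concrete, here is a minimal sketch that scans SAS source text and counts a few common constructs. The patterns and the `inventory_sas_source` function are illustrative assumptions, not part of any specific assessment tool. The scan only recognizes statements that begin a line, so a real scanner would need a proper SAS parser.

```python
import re
from collections import Counter

# Regex patterns for a few common SAS constructs (illustrative, not exhaustive).
# Each pattern only matches a statement that starts at the beginning of a line.
FLAGS = re.IGNORECASE | re.MULTILINE
PATTERNS = {
    "data_step": re.compile(r"^[ \t]*data\s+[\w.]+", FLAGS),
    "proc": re.compile(r"^[ \t]*proc\s+(\w+)", FLAGS),
    "macro_def": re.compile(r"^[ \t]*%macro\s+(\w+)", FLAGS),
    "libname": re.compile(r"^[ \t]*libname\s+(\w+)", FLAGS),
}


def inventory_sas_source(source: str) -> dict:
    """Count DATA steps and PROC types, and list macro and library names."""
    return {
        "data_steps": len(PATTERNS["data_step"].findall(source)),
        "procs": Counter(name.upper() for name in PATTERNS["proc"].findall(source)),
        "macros": PATTERNS["macro_def"].findall(source),
        "libraries": PATTERNS["libname"].findall(source),
    }


# Hypothetical SAS program used only to exercise the scanner.
sample = """
libname sales '/data/sales';
%macro monthly_report(month);
  proc sql;
    create table work.summary as
    select region, sum(amount) as total from sales.orders group by region;
  quit;
  proc sort data=work.summary; by region; run;
%mend monthly_report;
data work.flags;
  set work.summary;
  high = (total > 1000);
run;
"""

report = inventory_sas_source(sample)
print(report["data_steps"], dict(report["procs"]), report["macros"], report["libraries"])
```

Running a scan like this across a code repository gives a first-pass view of which PROC types dominate, which helps when deciding which workloads to migrate first.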

Design the Snowflake Data Architecture

Once the current environment is assessed, organizations must design the target Snowflake architecture. This involves defining how SAS datasets will be mapped into Snowflake tables, designing schema structures optimized for Snowflake’s columnar storage, and determining the best approach for migrating data and workloads.
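The mapping from SAS datasets to Snowflake tables can be sketched as follows. SAS stores every column as either character or numeric, and dates are numeric values distinguished only by their display format, so the sketch uses the format name to pick a Snowflake type. The `SasColumn` class, the list of formats, and the fallback to `FLOAT` are assumptions made for illustration. A real mapping would cover more formats and would profile numeric columns to decide between `FLOAT` and `NUMBER`.

```python
from dataclasses import dataclass
from typing import List, Optional

# SAS formats that mark a numeric column as a date or datetime (illustrative subset).
DATETIME_FORMATS = ("DATETIME",)
DATE_FORMATS = ("DATE", "MMDDYY", "DDMMYY", "YYMMDD")


@dataclass
class SasColumn:
    """Hypothetical holder for column metadata taken from a SAS dataset."""
    name: str
    sas_type: str                     # "char" or "num"
    length: int                       # storage length in bytes
    sas_format: Optional[str] = None  # e.g. "DATE9." or "DATETIME20."


def snowflake_type(col: SasColumn) -> str:
    """Choose a Snowflake column type from SAS type, length, and format."""
    if col.sas_type == "char":
        return f"VARCHAR({col.length})"
    fmt = (col.sas_format or "").upper()
    # Check DATETIME first because "DATETIME20." also starts with "DATE".
    if fmt.startswith(DATETIME_FORMATS):
        return "TIMESTAMP_NTZ"
    if fmt.startswith(DATE_FORMATS):
        return "DATE"
    # SAS numerics are 8-byte floating point values by default.
    return "FLOAT"


def create_table_ddl(table: str, columns: List[SasColumn]) -> str:
    """Build a CREATE TABLE statement for the given columns."""
    body = ",\n".join(f"    {c.name.upper()} {snowflake_type(c)}" for c in columns)
    return f"CREATE TABLE {table} (\n{body}\n);"


# Hypothetical dataset layout.
columns = [
    SasColumn("customer_id", "num", 8),
    SasColumn("name", "char", 40),
    SasColumn("signup_dt", "num", 8, "DATE9."),
    SasColumn("last_login", "num", 8, "DATETIME20."),
]
ddl = create_table_ddl("ANALYTICS.CUSTOMERS", columns)
print(ddl)
```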

At this stage, organizations choose between different migration strategies such as lift-and-shift, partial modernization, or full transformation of analytics pipelines. Snowflake-native tools help streamline data loading and transformation: Snowpipe handles continuous ingestion, SnowSQL provides a command-line client for running SQL and loading files, and Snowpark offers a DataFrame-style API for Python and other languages. Proper architecture planning ensures that the migrated environment can support high-performance analytics and secure data access across teams.

Transform SAS Code and Workflows

One of the most technically challenging stages of migration involves converting SAS scripts and analytics logic into Snowflake-compatible frameworks. SAS programs often contain complex constructs like PROC statements, macros, and advanced statistical functions that cannot run directly on Snowflake.

Automated transformation frameworks help convert SAS workloads into target formats such as Snowflake SQL, Snowpark Python, or dbt (data build tool) models. These frameworks analyze code patterns and automatically convert large portions of SAS logic into equivalent Snowflake queries and pipelines, leaving the remaining constructs for manual review. In many cases, they can reduce manual development time while preserving the original analytical logic.
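Pattern-based conversion can be illustrated with a toy rule that rewrites one narrow form of PROC SORT into Snowflake SQL. This is a sketch of the general idea, not how any particular conversion product works. It handles only `data=`, `out=`, and a `by` statement, passes library names through unchanged, and adds explicit `NULLS FIRST` and `NULLS LAST` clauses because SAS sorts missing values lowest while Snowflake by default treats NULL as higher than any value.

```python
import re

# Matches: proc sort data=<src> out=<dst>; by <vars>; run;
PROC_SORT = re.compile(
    r"proc\s+sort\s+data\s*=\s*([\w.]+)\s+out\s*=\s*([\w.]+)\s*;\s*"
    r"by\s+([^;]+);\s*run\s*;",
    re.IGNORECASE,
)


def translate_proc_sort(sas_code: str) -> str:
    """Translate a simple PROC SORT step into a CREATE TABLE ... AS SELECT."""
    match = PROC_SORT.search(sas_code)
    if match is None:
        raise ValueError("unsupported PROC SORT pattern")
    source, target, by_clause = match.groups()

    order_terms = []
    descending = False
    for token in by_clause.split():
        if token.lower() == "descending":
            descending = True  # in SAS, DESCENDING applies to the next variable only
            continue
        # Spell out NULL placement so missing values land where SAS would put them.
        suffix = "DESC NULLS LAST" if descending else "ASC NULLS FIRST"
        order_terms.append(f"{token} {suffix}")
        descending = False

    return (
        f"CREATE OR REPLACE TABLE {target} AS\n"
        f"SELECT * FROM {source}\n"
        f"ORDER BY {', '.join(order_terms)};"
    )


# Hypothetical SAS step.
sas_step = "proc sort data=work.sales out=work.sales_sorted; by region descending amount; run;"
print(translate_proc_sort(sas_step))
```

Note that a Snowflake table has no guaranteed row order when queried, so downstream queries that depend on sorted output still need their own `ORDER BY`. Steps that the rule does not recognize raise an error and can be routed to manual conversion.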

Extract Data and Rebuild Pipelines

After transforming analytics workloads, organizations begin migrating datasets and rebuilding data pipelines in the Snowflake environment. SAS datasets are typically exported into compatible formats like CSV, Parquet, or Avro before being ingested into Snowflake using bulk loading mechanisms.
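One detail worth handling during export is date encoding. SAS stores dates as the number of days since January 1, 1960, and datetimes as the number of seconds since midnight on that day, so raw numeric values need converting before they are loaded into `DATE` or `TIMESTAMP` columns. The sketch below writes rows to CSV with that conversion applied. The function names, column names, and sample rows are hypothetical.

```python
import csv
import io
from datetime import date, datetime, timedelta

SAS_EPOCH_DATE = date(1960, 1, 1)
SAS_EPOCH_DATETIME = datetime(1960, 1, 1)


def sas_date_to_iso(days: float) -> str:
    """Convert a SAS date value (days since 1960-01-01) to YYYY-MM-DD."""
    return (SAS_EPOCH_DATE + timedelta(days=int(days))).isoformat()


def sas_datetime_to_iso(seconds: float) -> str:
    """Convert a SAS datetime value (seconds since 1960-01-01 00:00:00)."""
    return (SAS_EPOCH_DATETIME + timedelta(seconds=seconds)).isoformat(sep=" ")


def export_rows_to_csv(rows, date_columns=(), datetime_columns=()) -> str:
    """Write a list of row dicts to CSV text, converting SAS date encodings."""
    if not rows:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()), lineterminator="\n")
    writer.writeheader()
    for row in rows:
        out = dict(row)
        for col in date_columns:
            if out[col] is not None:  # missing values stay empty in the CSV
                out[col] = sas_date_to_iso(out[col])
        for col in datetime_columns:
            if out[col] is not None:
                out[col] = sas_datetime_to_iso(out[col])
        writer.writerow(out)
    return buffer.getvalue()


# Hypothetical rows as they might come out of a SAS dataset reader.
rows = [
    {"id": 1, "order_date": 22281, "created_at": 86400.0},
    {"id": 2, "order_date": None, "created_at": None},
]
csv_text = export_rows_to_csv(rows, date_columns=["order_date"], datetime_columns=["created_at"])
print(csv_text)
```

Columnar formats such as Parquet carry typed date columns and avoid this string conversion, but the same epoch offset applies when building those typed values.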

Snowpipe, Streams, and Tasks allow organizations to automate data ingestion and transformation workflows: Snowpipe loads files continuously as they arrive, Streams track changes to tables, and Tasks run SQL on a schedule. These cloud-native pipelines replace traditional SAS ETL jobs and enable organizations to process large datasets more efficiently. Modern data orchestration frameworks also help schedule migration jobs and maintain continuous data pipelines within Snowflake’s architecture.

Test and Validate

The final step in the migration process involves validating data accuracy, optimizing query performance, and operationalizing the Snowflake platform for enterprise use. During this stage, organizations compare outputs between SAS and Snowflake to ensure that analytical models, reports, and statistical calculations produce consistent results.

Automated validation frameworks perform row-level comparisons, schema validation, and reconciliation checks to ensure data integrity. Once testing and validation are complete, organizations deploy production pipelines, implement CI/CD workflows, and connect analytical tools. Operationalization also includes performance tuning, cost optimization, and team training.
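A row-level reconciliation check can be sketched in a few lines. The example below compares rows exported from SAS with rows queried from Snowflake, matching on a key column and allowing a small relative tolerance for floating-point values, since the two engines may round differently. The function name, tolerance, and sample data are assumptions for illustration. At scale, the same comparison would usually be pushed down into SQL or run on aggregates first.

```python
import math


def _values_match(expected, actual, rel_tol):
    """Compare two values, using a tolerance when either one is a float."""
    if expected is None or actual is None:
        return expected is None and actual is None
    if isinstance(expected, float) or isinstance(actual, float):
        return math.isclose(expected, actual, rel_tol=rel_tol)
    return expected == actual


def reconcile(sas_rows, snowflake_rows, key, rel_tol=1e-9):
    """Report keys missing on either side and column values that differ."""
    sas_by_key = {row[key]: row for row in sas_rows}
    sf_by_key = {row[key]: row for row in snowflake_rows}

    report = {
        "missing_in_snowflake": sorted(sas_by_key.keys() - sf_by_key.keys()),
        "unexpected_in_snowflake": sorted(sf_by_key.keys() - sas_by_key.keys()),
        "mismatches": [],
    }
    for k in sorted(sas_by_key.keys() & sf_by_key.keys()):
        for column, expected in sas_by_key[k].items():
            actual = sf_by_key[k].get(column)
            if not _values_match(expected, actual, rel_tol):
                report["mismatches"].append((k, column, expected, actual))
    return report


# Hypothetical outputs from the two platforms.
sas_rows = [
    {"id": 1, "total": 100.10},
    {"id": 2, "total": 55.0},
    {"id": 3, "total": 9.99},
]
snowflake_rows = [
    {"id": 1, "total": 100.1000000000001},  # within tolerance
    {"id": 2, "total": 54.0},               # genuine mismatch
    {"id": 4, "total": 1.0},                # row not present in SAS
]
result = reconcile(sas_rows, snowflake_rows, key="id")
print(result)
```

Here the report flags key 3 as missing in Snowflake, key 4 as unexpected, and the `total` for key 2 as a mismatch, while the tiny floating-point difference on key 1 is accepted.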

Accelerate Your SAS to Snowflake Transformation with PROLIM Digital

Migrating from SAS to Snowflake demands an effective data migration strategy. As a Snowflake Elite Partner, PROLIM Digital brings deep expertise in modernizing legacy analytics environments and helping enterprises transition to Snowflake’s cloud-native data platform. We support enterprises at every stage of the migration journey, starting with a comprehensive assessment of existing SAS workloads and dependencies. We help organizations design a detailed cloud migration plan that aligns with their analytics objectives. Using advanced migration accelerators and Snowflake capabilities such as Snowpark, Snowpipe, and automated data ingestion pipelines, PROLIM Digital helps ensure that SAS datasets, scripts, and analytics workflows are efficiently converted into scalable Snowflake solutions.

Conclusion

Migrating from SAS to Snowflake represents a significant step toward modernizing enterprise analytics and building a more scalable, cloud-first data ecosystem. By transitioning SAS workloads to Snowflake, enterprises can gain elastic scalability, consumption-based pricing, and access to modern data and AI tooling. But achieving these benefits requires a well-planned data migration strategy that carefully transforms legacy workflows, preserves analytical accuracy, and optimizes data architectures for the cloud. With the right approach and expertise, organizations can establish future-ready analytics on Snowflake.